(How Long) Do Pictures Still Count as Evidence?

A study by the Bonn-Rhein-Sieg University of Applied Sciences shows that editorial teams suffer from time pressure in the fight against deepfakes and manipulated images. Technical tools alone are not enough to check the authenticity of image material.

A striking example from March 2022 illustrates this problem: the news channel WELT supplemented a conversation from Ukraine with video footage that allegedly showed helicopters being shot down in Ukraine. However, the footage actually came from a computer game. The case shows how manipulated image and video content can find its way into the media and undermine the credibility of reporting.

Increase in disinformation

During the Covid-19 pandemic and the war in Ukraine, the amount of disinformation, i.e. deliberately disseminated false information, increased significantly, explains Joscha Weber, head of Deutsche Welle’s fact check team. According to the RedaktionsNetzwerk Deutschland, never before has a modern war produced such a flood of unverifiable images. ZDF also describes how the first few weeks after the attack were characterized by an unclear news situation and a flood of videos from social networks. Eliot Higgins, founder of the research network Bellingcat, now sees disinformation as an important part of the foreign policy of authoritarian states and speaks of an “age of information wars”.

Against this backdrop, the question arises as to how editorial offices can ensure the authenticity of audiovisual material. A master’s thesis at the Bonn-Rhein-Sieg University of Applied Sciences examined this question and conducted seven interviews with professionals from academia and editorial practice.

Today’s flood of images on social media makes it possible to document events such as the Arab Spring, the Russian invasion of Ukraine or the current situation in the Gaza Strip. This freely available material helps to reconstruct and provide evidence for incidents such as the shooting down of the MH17 passenger plane. At the same time, technological developments in recent years have made it easier, cheaper and faster to produce authentic-looking manipulations.

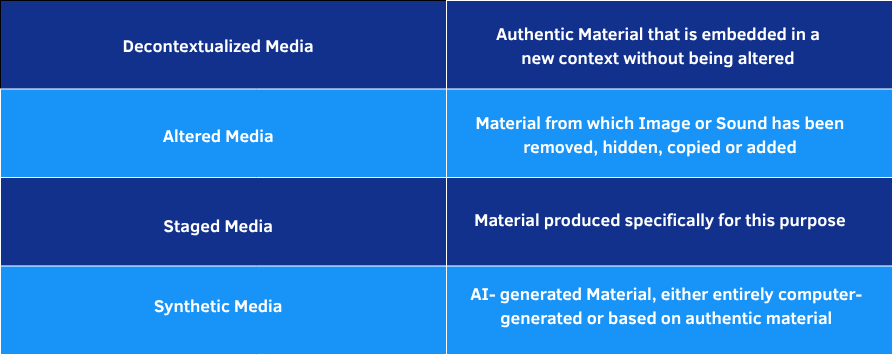

Various forms of manipulation

Ten years ago, the manipulation of video material, apart from simple operations at image level, was only possible in the context of film productions. Today, things are different: AI-generated media, known as synthetic media or deepfakes, are ubiquitous. Examples of how AI-generated images reach the public and influence the perception of current events are becoming increasingly common.

However, the evaluation of the interviews conducted shows that although synthetic media is potentially the most perfect form of manipulation, it hardly plays a role in everyday editorial work. Instead, the distribution of decontextualized content is currently seen as the greatest challenge.

I really think deceptive context is the biggest challenge overall, much more than manipulation so far. There's a big discussion about deepfakes, but they don't play a big role in our research yet.

- Johanna Wild, research network Bellingcat

Forms of manipulation – an overview

Potentially perfect: synthetic media

The potential of synthetic media is considerable. While decontextualized media can usually be shown to have been used in a different context at an earlier point in time, a perfect manipulation, as is potentially possible with the help of artificial intelligence (AI), cannot be proven false. “It is technically difficult to detect deepfakes. It is even more difficult to say with certainty that this is not a deepfake. You have to keep looking,” explains Jutta Jahnel from the Karlsruhe Institute of Technology.

A convincing, high-quality result currently requires a lot of training data, about a day of training time, and manual post-processing to disguise the transitions between authentic and AI-generated material, explains Dominique Dresen, deepfake expert at the Federal Office for Information Security (BSI). For results with a lower resolution, however, the effort is already significantly lower under good initial conditions.

The effort is made even more manageable by the possibility of generating images completely synthetically from a text description, as is possible with DALL-E, Midjourney and similar technologies. IT forensic experts such as Sascha Zmudzinski from the Fraunhofer Institute for Secure Information Technology (SIT) and Christian Riess, group leader for multimedia security at FAU Erlangen-Nürnberg, see these developments as a major challenge and threat.

We have this classic idea: an image is a physical measurement in principle of photons that were traveling in the direction of my camera. And you can forget that. That no longer exists.

- Christian Riess, FAU Erlangen-Nürnberg

The rapid development of the technology is demonstrated by the example of Sora. OpenAI presented the AI tool in February 2024, and it already appears to be able to create authentic-looking videos of up to 60 seconds in length from text instructions.

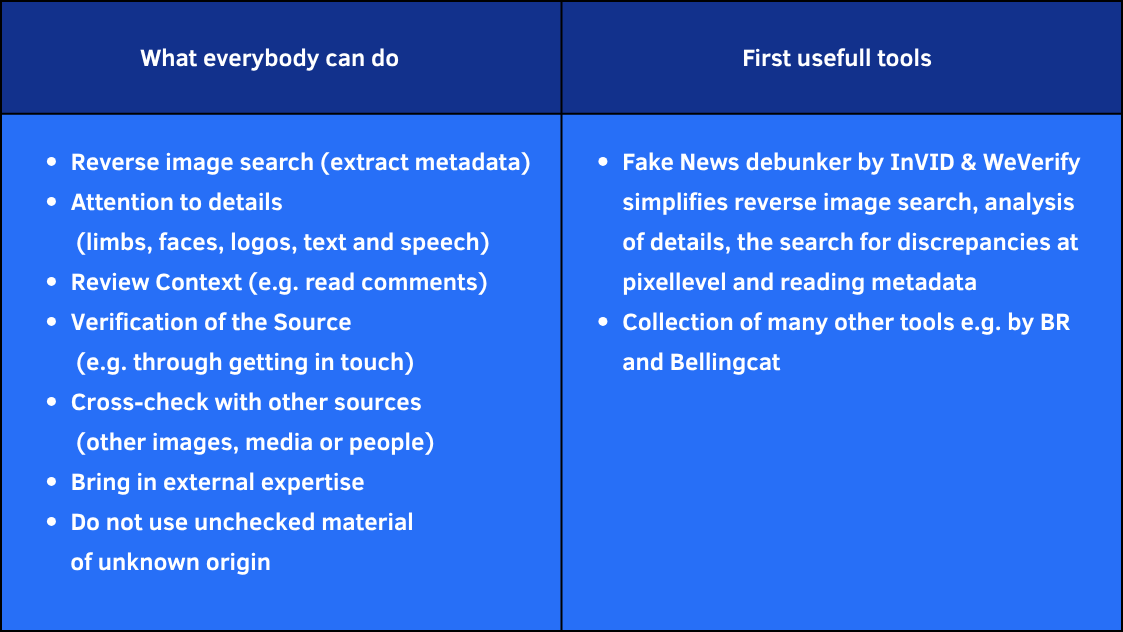

No purely technical solution possible

The experts interviewed disagree on the extent to which (automated) detection systems can help with the verification of audiovisual material. However, they agree that there will be no purely technical solution. It will no longer be possible to prove the authenticity of the material, but only the plausibility of the information it contains. And this will still require people who are able to recognize audiovisual manipulations. And that takes time. “Tools don’t help us if we don’t have time to deal with them. And that is often the crux of the matter,” says Johanna Wild from the Bellingcat research network (https://de.bellingcat.com).

A look into practice: Research approaches and tools for journalists

Just as there is no single problem-solving tool, there is no single approach to verifying audiovisual content. Instead, experts suggest answering five key questions to structure the verification process:

1. Do we see the original version? (originality)

2. Do we know who made it? (source)

3. Do we know where it was made? (location)

4. Do we know when it was made? (date)

5. And do we know why it was made? (motivation)
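For teams that track verification in a script or a small internal tool, the five questions above could be recorded as a simple checklist. The following is only a minimal sketch: the class `VerificationChecklist`, its methods, and the file name in the usage example are hypothetical and do not come from the study. The rule that an item counts as fully checked only when all five questions are answered is likewise an assumption made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# The five key questions from the list above, keyed by the label in parentheses.
QUESTIONS: Dict[str, str] = {
    "originality": "Do we see the original version?",
    "source": "Do we know who made it?",
    "location": "Do we know where it was made?",
    "date": "Do we know when it was made?",
    "motivation": "Do we know why it was made?",
}


@dataclass
class VerificationChecklist:
    """Hypothetical record of the verification status of one image or video."""

    item: str
    # None = not yet checked, True = answered, False = could not be answered
    answers: Dict[str, Optional[bool]] = field(
        default_factory=lambda: {key: None for key in QUESTIONS}
    )

    def record(self, key: str, answered: bool) -> None:
        """Store the outcome for one of the five questions."""
        if key not in QUESTIONS:
            raise KeyError(f"unknown question: {key}")
        self.answers[key] = answered

    def open_questions(self) -> List[str]:
        """Return the questions that are unchecked or could not be answered."""
        return [QUESTIONS[k] for k, v in self.answers.items() if v is not True]

    def all_answered(self) -> bool:
        """Assumed rule: fully checked only if all five questions are answered."""
        return all(v is True for v in self.answers.values())
```

A short usage example with a made-up file name:

```python
check = VerificationChecklist("clip.mp4")
check.record("source", True)
check.record("date", False)
for question in check.open_questions():
    print(question)
```

A checklist like this only documents what has been checked. It does not replace the research itself, which still needs people and time.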

Both traditional journalistic research and multimedia forensics methods help to answer these questions. Multimedia forensics is a branch of computer science that deals with the technical possibilities for determining the origin and authenticity of audiovisual media.

Table: Martha Peters

“What is real at all?”

Regardless of the problems caused by manipulated image and sound material, several experts highlighted a risk in the interviews that they sometimes consider more dangerous than the manipulation itself. They refer to it as the “Liar’s Dividend”. The term describes how the mere possibility of deceptive manipulation leads to everything being seen as potentially manipulated, including authentic content.

This uncertainty, that everything is seen as potentially false or manipulated, is a major problem for society. And it could happen that actors at all possible levels have an interest in destabilizing societies and actively influencing narratives.

- Jochen Spangenberg, Deputy Head of DW Research and Cooperations Projects

There is a lot to be done everywhere – in technical and regulatory terms, inside and outside editorial offices and in terms of the media literacy of recipients. One possible approach could be to stop trying to keep up with the flood of content on the internet and instead to create editorial added value through reliable information and absolute source transparency.

The study

The results presented are based on a study that Martha Peters submitted in July 2023 as her master’s thesis in the Master’s programme Technology and Innovation Communication at Bonn-Rhein-Sieg University of Applied Sciences. The study sheds light on the current state of manipulation and verification possibilities, and describes and evaluates existing problems and possible solutions for editorial offices.

The work was awarded the Förderpreis Informationskompetenz 2025.

First supervisor: Prof. Dr. Susanne Keil

Second supervisor: Prof. Dr. Tanja Köhler


Martha Rebekka Peters

Martha Rebekka Peters, born in Bonn in 1997, prefers to spend her free time in the vertical – whether in the climbing gym or on the rocks. She has also been a youth leader for many years. However, she now spends at least as much time at party conferences as she does in the mountains: as a freelancer for phoenix, she accompanies political events and increasingly deals with targeted disinformation – especially when dealing with populist parties. This challenge gives her the opportunity to put her passion for facts, journalistic diligence and in-depth research to good use.